If your website traffic is up but results feel off, you’re not imagining it.

You publish content. You optimize SEO. You track performance.

But engagement drops, conversion rates don’t move, and marketing decisions feel increasingly uncertain.

What’s changed isn’t your effort — it’s who’s visiting your site.

Today, a significant share of website traffic comes from bots, AI systems, and automated crawlers that analyze content rather than use it. For modern digital teams, traffic no longer represents people alone. It represents a mix of humans, machines, and systems evaluating your site for different purposes.

Understanding that shift is essential to making sense of analytics accuracy, SEO performance, and growth strategy outcomes in today’s web environment.

What Is Bot Traffic?

Bot traffic refers to any non-human activity on a website or application generated by automated software programs rather than real users.

These programs, commonly called bots, are designed to perform specific tasks, such as:

- Crawling and indexing content

- Monitoring website performance

- Collecting data

- Training AI models

- Detecting changes or anomalies

Bot traffic is often framed negatively, but that view is incomplete. Bots are not inherently good or bad. Their value depends entirely on their purpose and how they interact with your site.

Search engines rely on bots to function. Digital assistants depend on automated crawlers. Without bots, modern web discovery and AI-driven retrieval would not exist.

The real challenge for businesses is not eliminating bots. It is understanding how bot activity affects traffic quality, analytics interpretation, and performance measurement.

What Is Bot Traffic on a Website?

On a website, bot traffic appears as sessions that don’t behave like human users.

Most bots do not scroll, pause, or evaluate content the way a person does. Instead, they request pages, extract information, and move on quickly. This behavior creates measurable distortions.

Bot traffic can:

- Increase pageviews without improving engagement

- Distort bounce rates and session duration

- Submit forms with fake or unusable data

- Consume server resources

- Crawl pages that real users rarely visit

For website owners, this creates a dangerous illusion. Traffic appears healthy, but conversions stall. Optimization efforts feel ineffective. Attribution becomes unreliable.

The key realization is this: not all traffic is meant to convert. Some traffic exists to evaluate your site, not to use it. Treating both the same leads to flawed conclusions and poor optimization decisions.

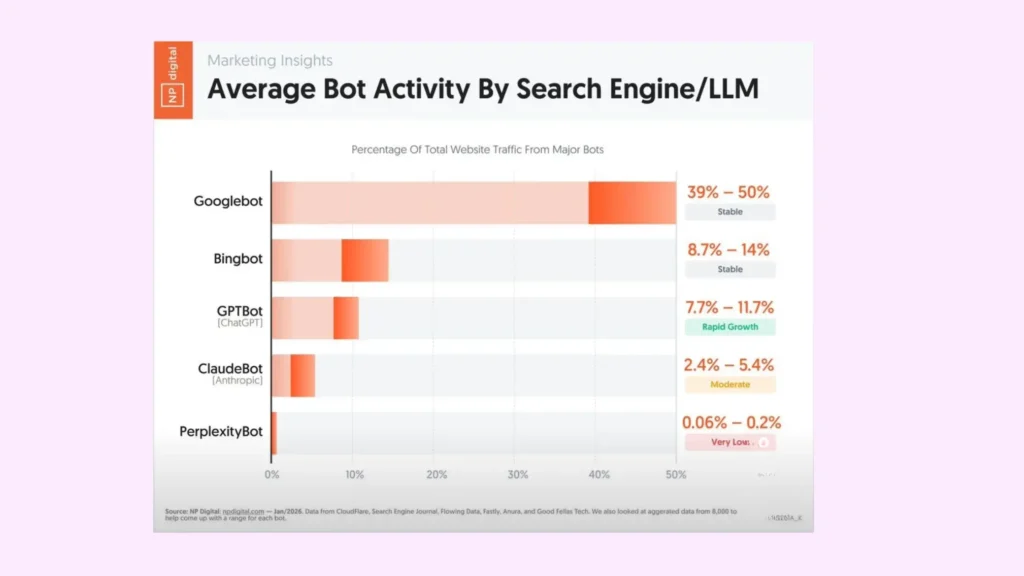

Bot Activity by Search Engines

Search engine bots are the most familiar and widely accepted form of bot traffic.

How Search Engine Bots Work

Search engines use automated crawlers to:

- Discover new pages

- Understand content relevance

- Evaluate site structure

- Determine ranking eligibility

Common examples include Googlebot, Bingbot, and regional search crawlers.

These bots systematically scan websites, follow internal links, read content, and assess technical signals such as load speed, mobile friendliness, and metadata quality.

For most businesses, this bot traffic is essential. Without it, your site cannot achieve organic visibility. However, it is important to recognize that search engine bot activity supports discoverability — not demand generation.

Bot Activity by LLMs and AI Systems

A newer and increasingly influential category of bot traffic comes from AI systems and Large Language Models (LLMs).

Unlike traditional search bots, LLM bots are not designed to rank pages. They are designed to understand, extract, and reuse information.

What LLM Bots Do

LLM-related bots crawl websites to:

- Extract factual information

- Learn definitions and explanations

- Build contextual knowledge

- Support AI-generated answers and summaries

When users ask questions inside AI tools, those answers may be generated using your content — often without sending traffic back to your site.

This marks a fundamental shift in digital performance measurement: your content can create value without generating visits.

Key Types of AI and Search Bots

Not all bots operate with the same intent. From a growth and analytics perspective, categorization matters.

- Search Engine Crawlers: Influence indexing, rankings, and organic visibility.

- AI Training and Retrieval Bots: Power LLM responses and AI-driven discovery.

- Monitoring Bots: Track uptime, performance, pricing, or content changes.

- Scraping and Data Collection Bots: Extract information, sometimes without permission or attribution.

- Malicious Bots: Engage in spam, fraud, or infrastructure attacks.

Each category affects traffic quality, analytics accuracy, SEO efficiency, and infrastructure cost differently. Treating them all the same is a strategic mistake.
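As a rough illustration of this categorization, the sketch below labels a request by the user-agent string it sends. The token lists are illustrative assumptions, not a complete or authoritative registry, and the function name `classify_user_agent` is hypothetical. User-agent strings can be spoofed, and scraping or malicious bots often do not identify themselves at all, so this is only a first-pass label.

```python
# Illustrative, incomplete token lists (assumptions, not an official registry).
# User-agent strings can be spoofed, so treat the result as a first-pass label.
BOT_CATEGORIES = {
    "search_engine": ("googlebot", "bingbot"),
    "ai_training_or_retrieval": ("gptbot", "claudebot"),
    "monitoring": ("uptimerobot",),
}


def classify_user_agent(user_agent: str) -> str:
    """Return a coarse category for a user-agent string."""
    ua = user_agent.lower()
    for category, tokens in BOT_CATEGORIES.items():
        if any(token in ua for token in tokens):
            return category
    # Generic hints that a client identifies itself as automated.
    if any(hint in ua for hint in ("bot", "crawler", "spider")):
        return "other_bot"
    return "unclassified"


print(classify_user_agent("Mozilla/5.0 (compatible; Googlebot/2.1)"))
```

Anything that comes back as `unclassified` is not necessarily human. It only means the client did not announce itself, which is why behavioral signals still matter.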

Differences Between Search Bots and LLM Bot Activity

Understanding this distinction is critical for modern SEO and content strategy.

Search Engine Bots

- Purpose: Index and rank pages

- Outcome: Click-based traffic

- Focus: Keywords, links, technical SEO

- Value: Search visibility

LLM Bots

- Purpose: Generate answers and insights

- Outcome: Zero-click exposure

- Focus: Clarity, authority, factual accuracy

- Value: AI citations and brand trust

Search bots reward optimization.

LLM bots reward comprehension.

This is why content that ranks well is not always content that AI systems rely on — and why modern strategies must account for both.

How Bot Traffic Can Be Identified

Most businesses experience bot traffic long before they recognize it.

Marketers typically detect bot activity through analytics anomalies and performance inconsistencies, such as:

- High pageviews without conversion lift

- Sudden bounce rate spikes

- Extremely short or unnaturally long sessions

- Junk form submissions

- Traffic surges from irrelevant locations

Individually, these signals are inconclusive. Together, they point to declining analytics reliability and to reduced clarity in performance signals.
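One way to act on the idea that signals only mean something in combination is to count how many of them a session shows before flagging it. The sketch below assumes a hypothetical `Session` record exported from your analytics tool. The field names and every threshold are assumptions chosen for illustration and would need tuning against real data.

```python
from dataclasses import dataclass


@dataclass
class Session:
    duration_seconds: float
    pageviews: int
    converted: bool
    junk_form_submission: bool
    from_target_region: bool


def bot_signal_count(s: Session) -> int:
    """Count how many bot-like signals a session shows (thresholds are assumed)."""
    signals = [
        # Extremely short or unnaturally long session.
        s.duration_seconds < 2 or s.duration_seconds > 4 * 3600,
        # Many pageviews with no conversion.
        s.pageviews >= 30 and not s.converted,
        s.junk_form_submission,
        not s.from_target_region,
    ]
    return sum(signals)


def looks_automated(s: Session, min_signals: int = 2) -> bool:
    """A single signal is inconclusive, so require several before flagging."""
    return bot_signal_count(s) >= min_signals


suspect = Session(1.0, 40, False, False, False)
print(bot_signal_count(suspect), looks_automated(suspect))
```

Requiring at least two signals keeps a quick human visit from an unexpected country from being mislabeled on its own.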

Impact on Websites and SEO

Analytics Distortion

When bots dominate sessions, core metrics become unreliable. Pageviews, bounce rate, and session duration lose their ability to reflect real behavior.

This makes it difficult to:

- Evaluate content performance accurately

- Run meaningful A/B tests

- Optimize conversion funnels

- Make confident growth decisions

Decisions based on noisy data rarely produce predictable outcomes.
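A small worked example shows how much a metric can shift once bot sessions are counted. All numbers below are made up for illustration, and the example assumes that bots never convert.

```python
def conversion_rate(conversions: int, sessions: int) -> float:
    """Conversions divided by sessions, as a percentage."""
    return 100.0 * conversions / sessions if sessions else 0.0


# Hypothetical month of traffic: every number here is invented.
total_sessions = 10_000
bot_sessions = 4_000
conversions = 150  # assumed to come from human sessions only

blended = conversion_rate(conversions, total_sessions)
human_only = conversion_rate(conversions, total_sessions - bot_sessions)

print(f"blended: {blended:.1f}%  human-only: {human_only:.1f}%")
```

With these assumed figures, the blended rate is 1.5% while the human-only rate is 2.5%. The site did not get worse at converting people. The denominator simply includes sessions that were never going to convert.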

Crawl Budget and Indexing Efficiency

Search engines allocate limited crawl budgets. Excessive or inefficient crawling can delay the indexing of high-value pages.

Poor structure, duplicate content, or uncontrolled bot access reduces SEO efficiency — even when content quality is strong.
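One common way to guide well-behaved crawlers is a robots.txt file. The sketch below uses Python's standard `urllib.robotparser` module to check what a set of rules would permit. The rules, paths, and URLs are hypothetical, and robots.txt is advisory: it only affects crawlers that choose to honor it.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: keep all crawlers out of low-value paths,
# and opt one AI crawler out of the whole site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /cart

User-agent: GPTBot
Disallow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def allowed(user_agent: str, url: str) -> bool:
    """True if the parsed rules permit this user agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)


for agent in ("Googlebot", "GPTBot"):
    print(agent, allowed(agent, "https://example.com/products/blue-widget"))
```

Testing rules this way before deploying them helps avoid accidentally blocking the pages you most want indexed.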

Changing SEO Economics

Traditional SEO assumed rankings produced traffic. AI-driven discovery breaks that assumption.

Today, visibility may occur without clicks. Authority matters more than impressions. Being referenced can matter more than being visited.

SEO still matters — but its role has shifted from traffic generation to trust and discoverability infrastructure.

How Bot Traffic Can Hurt Performance

High-volume bot activity can strain infrastructure.

Even outside of deliberate attacks, aggressive crawling can:

- Increase bandwidth costs

- Slow page load times

- Degrade real user experience

Because these issues appear intermittently, they often go undiagnosed without proper monitoring and traffic segmentation.

Business Risks of Unmanaged Bot Traffic

For some business models, unmanaged bot traffic creates direct financial risk.

- Advertising-driven sites face click fraud and account penalties

- E-commerce platforms encounter inventory hoarding bots

- Content-driven businesses face AI reuse without traffic or monetization

In all cases, traffic volume masks declining traffic quality and undermines the integrity of revenue signals.

Managing Bot Traffic Effectively

Managing bot traffic requires balance, not brute force.

Effective strategies include:

- Rate limiting abusive request patterns

- Behavioral analysis to distinguish humans from bots

- Monitoring crawl behavior and anomalies

- Separating bot and human analytics views

The goal is not to block all bots — it is to ensure that bot activity aligns with business performance objectives.
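To make the rate-limiting idea concrete, here is a minimal sliding-window limiter. The class name, limits, and client identifiers are hypothetical. In practice this kind of control usually lives in a CDN, firewall, or web server rather than in application code, so treat this as a sketch of the logic only.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `max_requests` per client within `window_seconds`."""

    def __init__(self, max_requests: int, window_seconds: float) -> None:
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        # Client id -> timestamps of that client's allowed requests.
        self._hits = defaultdict(deque)

    def allow(self, client_id: str, now: float) -> bool:
        hits = self._hits[client_id]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True


limiter = SlidingWindowLimiter(max_requests=3, window_seconds=10.0)
results = [limiter.allow("crawler-a", t) for t in (0.0, 1.0, 2.0, 3.0)]
print(results)
```

Timestamps are passed in explicitly so the behavior is easy to test. A live deployment would pass something like `time.monotonic()` and key clients by IP address or verified bot identity.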

High-Intent Traffic in a Bot-Driven Web

As bot activity increases, high-intent traffic becomes the clearest indicator of real demand.

High-intent visitors arrive with purpose — to evaluate, compare, or act. They engage meaningfully, navigate intentionally, and interact with decision-stage content.

In a bot-heavy environment, these users may represent a smaller portion of total sessions, but they drive the majority of outcomes.

Focusing on high-intent traffic allows teams to:

- Measure true performance

- Identify reliable growth signals

- Optimize for outcomes instead of volume

In today’s web, traffic quality matters more than traffic quantity.

Why This Matters for Modern Growth Teams

Bot traffic is now part of the digital ecosystem.

Teams that adapt gain:

- Cleaner analytics

- Better performance measurement

- Stronger SEO foundations

- Clearer growth signals

Teams that don’t adapt risk optimizing for metrics that no longer reflect reality.

Final Thoughts: Adapting to a Bot-First Web

Website traffic hasn’t disappeared. It has evolved.

Bots now evaluate, interpret, and redistribute content at scale. Some visibility never shows up as a session. Some of it shapes perception before a user ever clicks.

The businesses that win are the ones that understand this shift — and build for it.

How Genbe Helps

As a leading digital marketing company in Visakhapatnam, Genbe helps brands navigate this new landscape by aligning traffic quality, analytics accuracy, SEO strategy, and content performance with how both humans and machines experience the web.

From improving analytics clarity to building authority-driven content strategies optimized for search engines and AI systems, Genbe focuses on decision-ready data and measurable outcomes — not vanity metrics.

If your traffic looks healthy but growth feels inconsistent, it may be time to look beyond the numbers and understand what’s really visiting your site.

That’s where Genbe comes in.

Frequently Asked Questions (FAQs)

What is bot traffic?

Bot traffic is non-human activity generated by automated programs that crawl, analyze, monitor, or collect data from websites. It can be useful or harmful depending on intent.

Are all bots bad for a website?

No. Search engine bots and AI bots are essential for visibility and discovery. Problems arise only from malicious or excessive bot activity.

How can I tell if my website is getting bot traffic?

Common signs include traffic spikes without conversions, unusually high bounce rates, odd session durations, fake form submissions, and traffic from unexpected regions.

Can bot traffic affect SEO?

Yes. Excessive bot activity can waste crawl budget, delay indexing, slow site performance, and distort SEO data — while good bots influence rankings and visibility.

How do AI and LLM bots change website visibility?

AI bots often surface your content directly in AI answers instead of sending clicks, making authority and clarity more important than raw traffic.

What’s the difference between search engine bots and AI bots?

Search bots crawl and index pages so they can be ranked and drive clicks. AI bots extract and understand content to generate answers, often resulting in zero-click exposure.